At CHAI’s Leadership Summit in Dana Point, California, Trustible CEO Gerald Kierce led a workshop alongside Melissa Fitzgerald (Chief Privacy Officer at Mass General Brigham), Shawn Stapleton, PhD (Director and Head of AI Lifecycle Management at UT MD Anderson), and a representative from a large managed care provider. The session, “How AI Governance Drives AI Outcomes,” skipped the fundamentals of AI governance and focused instead on how to scale agentic AI risk management and AI benefits measurement.

Scenario One: The Agent Nobody Approved

The first scenario reflects a situation already playing out in healthcare. An AI agent has been running in a clinical department for six weeks. It schedules follow-up appointments, routes care escalations, and sends patient messages without human review of each action. No governance intake was ever filed. Leadership found out today.

Before getting to governance responses, the workshop established a distinction the field uses inconsistently. Agentic AI is human-triggered: a person initiates the task, the AI executes it, and a human reviews the output. AI agents are independently triggered and autonomously executed, setting their own sub-tasks with minimal real-time oversight. That difference in trigger mechanism changes who’s accountable, what intake processes need to capture, and where oversight mechanisms have to live.

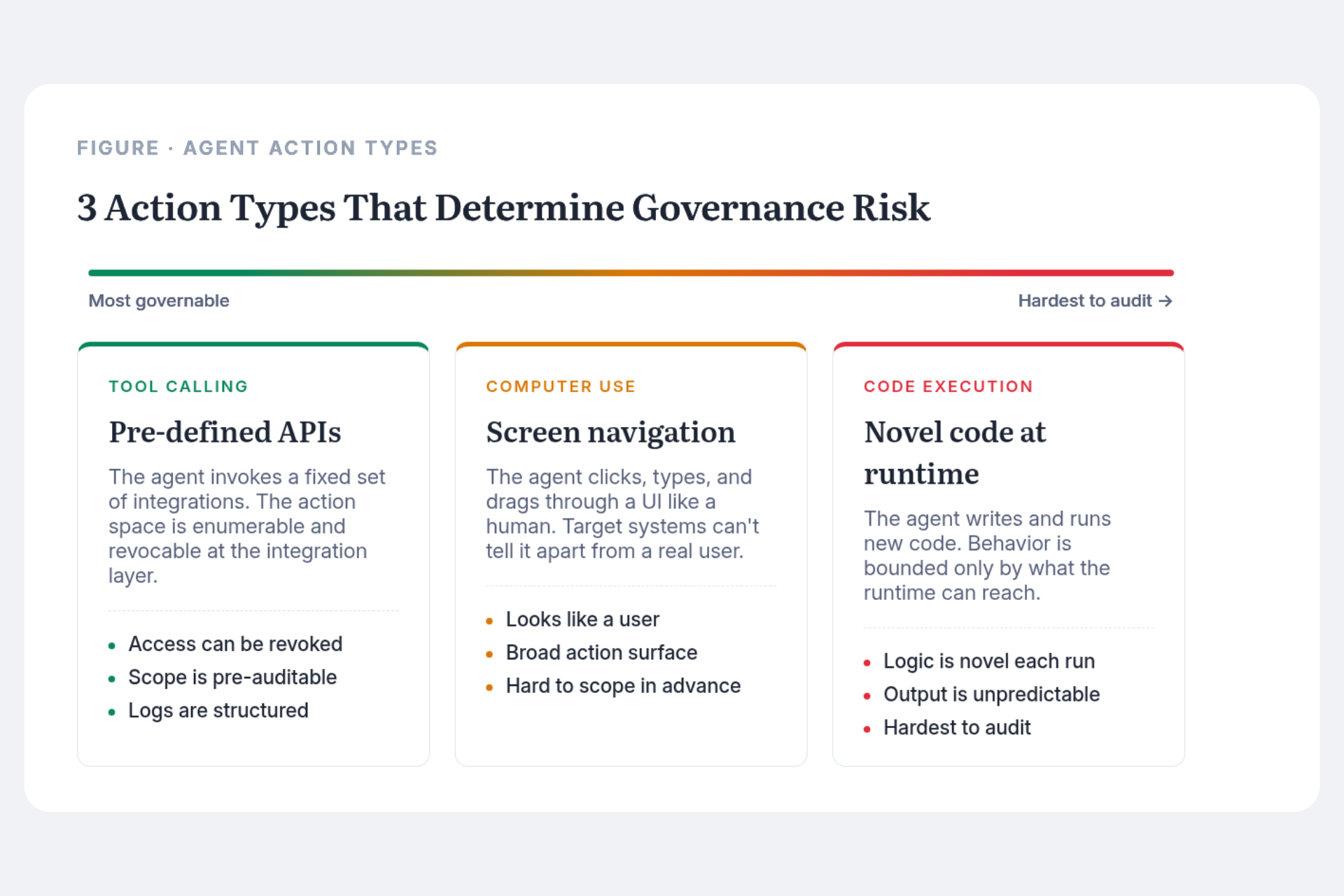

Three action types define how agents operate. Tool calling is the most governable: the agent calls pre-defined APIs, the action space is bounded, and access can be revoked quickly. Computer use is broader: the agent navigates screens like a human, and from the target system’s perspective, its actions are often indistinguishable from those of a legitimate user. Code generation and execution is the hardest to audit: the agent writes and runs novel code, with a blast radius bounded only by the runtime environment.

Most intake processes weren’t designed to distinguish among these three. And most governance programs can’t detect unauthorized agent activity until after the fact.
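To make the distinction concrete, here is a minimal Python sketch of an intake record that captures both the trigger mechanism and the action types an AI system uses. The names (`IntakeRecord`, `Trigger`, `ActionType`) are hypothetical, and the integer ranking of action types only encodes the ordering described above, from most to least governable; it is not a published scale. The example record for the Scenario One agent is also hypothetical, since the scenario doesn't say which action types the agent actually uses.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum, IntEnum


class Trigger(Enum):
    """Who starts the work, following the workshop's distinction."""
    HUMAN = "human-triggered (agentic AI)"
    INDEPENDENT = "independently triggered (AI agent)"


class ActionType(IntEnum):
    """Ordered from most to least governable.

    The integer values are an illustrative ranking (an assumption), not a standard.
    """
    TOOL_CALLING = 1    # bounded, pre-defined APIs; access is quick to revoke
    COMPUTER_USE = 2    # navigates screens; can look like a legitimate user
    CODE_EXECUTION = 3  # writes and runs novel code; hardest to audit


@dataclass
class IntakeRecord:
    """Hypothetical intake record; field names are illustrative."""
    name: str
    trigger: Trigger
    action_types: set[ActionType] = field(default_factory=set)
    human_reviews_each_action: bool = True

    def is_agent(self) -> bool:
        # Independently triggered systems are AI agents in the workshop's terms.
        return self.trigger is Trigger.INDEPENDENT

    def hardest_to_audit(self) -> ActionType | None:
        # max() on IntEnum members uses the illustrative ranking above.
        return max(self.action_types) if self.action_types else None


# Hypothetical record for the Scenario One scheduling agent.
scheduler = IntakeRecord(
    name="follow-up scheduling agent",
    trigger=Trigger.INDEPENDENT,
    action_types={ActionType.TOOL_CALLING, ActionType.COMPUTER_USE},
    human_reviews_each_action=False,
)
print(scheduler.is_agent(), scheduler.hardest_to_audit().name)  # True COMPUTER_USE
```

Keeping trigger and action type as separate fields lets the same intake form cover both a human-triggered assistant and an autonomous agent without conflating them.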

Shadow AI in healthcare is usually framed as a network security problem: catching unauthorized tool use before it touches sensitive systems. In practice, it’s just as often a scope creep problem. A tool gets approved for one purpose and quietly expands into adjacent workflows. A vendor adds an agentic feature to a product already in production. None of these events trigger a new intake submission or a policy alert. The approved use case sits unchanged in the inventory while the actual use has moved well beyond what anyone assessed.
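Catching scope creep means comparing what was approved against what is actually in use. The sketch below assumes, hypothetically, that an approved scope and an observed scope can each be expressed as sets of workflows and capabilities; how the observed scope gets collected (usage logs, vendor release notes, department surveys) is outside what it shows. Anything in the observed set that is missing from the approved set signals that a new intake submission is due.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Scope:
    """Workflows and capabilities for one tool; field names are hypothetical."""
    workflows: frozenset[str]
    capabilities: frozenset[str]


def scope_drift(approved: Scope, observed: Scope) -> dict[str, set[str]]:
    """Return what is in use today but was never part of the approved scope."""
    return {
        "new_workflows": set(observed.workflows - approved.workflows),
        "new_capabilities": set(observed.capabilities - approved.capabilities),
    }


def needs_new_intake(approved: Scope, observed: Scope) -> bool:
    # Any non-empty drift set means the inventory no longer matches reality.
    return any(scope_drift(approved, observed).values())


# Hypothetical example: a tool approved for reminders that has quietly expanded.
approved = Scope(
    workflows=frozenset({"appointment reminders"}),
    capabilities=frozenset({"send templated message"}),
)
observed = Scope(
    workflows=frozenset({"appointment reminders", "care escalation routing"}),
    capabilities=frozenset({"send templated message", "vendor-added agent feature"}),
)
print(needs_new_intake(approved, observed))  # True
```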

A contract gap compounds the problem. When a vendor embeds an AI capability into a product after the agreement is signed, most contracts weren’t written to address it. Disclosure obligations are vague. Termination provisions weren’t designed for “vendor added a model we never reviewed.” Health systems are navigating this without clear answers, and the gap deserves explicit attention in every contract renewal cycle.

Scenario Two: The Portfolio Review Your CFO Didn’t Know You Could Answer

The second scenario started with a CFO request: which of our 35 in-flight AI initiatives are delivering measurable value, for which stakeholders, and does that value justify the risk and operational cost?

AI governance programs that capture only risk at intake are leaving the most strategically important data on the table. Expected benefits (who captures them, at what magnitude, and whether they materialized after deployment) belong in the same system as the risk assessment.

Benefits in healthcare aren’t a single score. They’re a matrix. A clinical decision support tool might reduce physician cognitive load, improve patient outcomes, lower administrative cost, and strengthen competitive position simultaneously. Those are four distinct benefit categories captured by four different stakeholder groups. Collapsing them loses the information needed to prioritize and defend a portfolio. A researcher at an academic medical center using sensitive patient data to advance diagnostics may carry meaningful privacy exposure, but the downstream value to clinical knowledge and future patients compounds over time. Governance programs that can only see the risk side of that assessment will systematically under-approve high-value research use cases.

The framework presented in the workshop placed risk and benefit on the same canvas: high benefit and low risk get approved and accelerated; high benefit and high risk get conditional approval with rigorous mitigation; low benefit and high risk don’t get pursued. Most AI governance programs don’t have the data infrastructure to apply this consistently, because benefit capture wasn’t built into intake design from the start.
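One way to keep benefits as a stakeholder matrix and still apply the quadrant logic is sketched below in Python. The `UseCase` structure, the two-level `Level` scale, and the rule that any high stakeholder benefit counts as high overall are all illustrative assumptions, not the workshop's method. The quadrant outcomes follow the framework as described; the low-benefit, low-risk case wasn't covered, so the sketch reports it as unaddressed rather than inventing a decision.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass
class UseCase:
    name: str
    risk: Level
    # Benefits kept as a matrix (stakeholder group -> benefit level)
    # instead of being collapsed into a single score.
    benefits: dict[str, Level] = field(default_factory=dict)

    def overall_benefit(self) -> Level:
        # Illustrative rule (an assumption): any high benefit for any
        # stakeholder group counts as high benefit overall.
        return Level.HIGH if Level.HIGH in self.benefits.values() else Level.LOW


def triage(case: UseCase) -> str:
    """Map a use case onto the risk/benefit quadrants described in the workshop."""
    benefit = case.overall_benefit()
    if benefit is Level.HIGH and case.risk is Level.LOW:
        return "approve and accelerate"
    if benefit is Level.HIGH and case.risk is Level.HIGH:
        return "conditional approval with rigorous mitigation"
    if benefit is Level.LOW and case.risk is Level.HIGH:
        return "do not pursue"
    # Low benefit, low risk was not addressed by the framework as presented.
    return "not covered by the framework as described"


# Hypothetical levels for the clinical decision support example above.
cds = UseCase(
    name="clinical decision support",
    risk=Level.HIGH,
    benefits={
        "physicians": Level.HIGH,  # reduced cognitive load
        "patients": Level.HIGH,    # improved outcomes
        "finance": Level.LOW,      # lower administrative cost
        "strategy": Level.LOW,     # competitive position
    },
)
print(triage(cds))  # conditional approval with rigorous mitigation
```

Because the per-stakeholder levels stay on the record, the same data can later answer "for which stakeholders?" without re-running intake.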

The Monitoring Gap

Post-deployment monitoring surfaced as one of the session’s most contested topics, not because organizations aren’t doing it, but because nobody agrees on what it means. Trustible has acknowledged this challenge in a recent white paper on the AI monitoring activities every organization needs to put in place. Internal monitoring (tracking model behavior, performance drift, and outcome alignment after deployment) is inconsistently defined across clinical, data science, and compliance teams. External monitoring (what vendors are doing and what they’re obligated to disclose) is even more variable.

Point-in-time governance can’t keep pace with how fast AI capabilities and regulatory requirements move. A model approved 18 months ago may be operating in a substantially different regulatory environment today. A vendor’s system may have been materially updated without triggering a contract clause. The use case’s risk profile may have shifted because the underlying data population changed. Continuous signal, not periodic review windows, is what governance programs need to stay current. Most health systems have defined reassessment triggers on paper, but the detection mechanisms needed to catch a trigger event in practice remain underdeveloped.
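A continuous check can be as simple as comparing what was true at approval with what monitoring reports now, run on every new signal rather than at a scheduled review. The Python sketch below is a minimal illustration under stated assumptions: the snapshot fields, the 365-day age limit, and the 0.10 shift threshold are hypothetical, and population shift is measured with total variation distance between cohort proportions, which is one common choice among several. Regulatory changes are not modeled.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class ApprovalSnapshot:
    """What was true when the use case was approved (hypothetical fields)."""
    approved_on: date
    vendor_model_version: str
    population_mix: dict[str, float]  # share of patients per cohort


@dataclass
class CurrentSignal:
    """What monitoring reports today (hypothetical fields)."""
    as_of: date
    vendor_model_version: str
    population_mix: dict[str, float]


def population_shift(before: dict[str, float], after: dict[str, float]) -> float:
    # Total variation distance: half the sum of absolute differences, in [0, 1].
    cohorts = before.keys() | after.keys()
    return 0.5 * sum(abs(before.get(c, 0.0) - after.get(c, 0.0)) for c in cohorts)


def reassessment_triggers(
    snap: ApprovalSnapshot,
    now: CurrentSignal,
    max_age_days: int = 365,   # illustrative threshold
    max_shift: float = 0.10,   # illustrative threshold
) -> list[str]:
    """Return every trigger that fires for this signal; empty means no action."""
    triggers = []
    if (now.as_of - snap.approved_on).days > max_age_days:
        triggers.append("approval older than max_age_days")
    if now.vendor_model_version != snap.vendor_model_version:
        triggers.append("vendor model version changed")
    if population_shift(snap.population_mix, now.population_mix) > max_shift:
        triggers.append("patient population shifted")
    return triggers


# Hypothetical model approved about 18 months before the current signal.
snap = ApprovalSnapshot(date(2024, 1, 15), "v1.2", {"adult": 0.8, "pediatric": 0.2})
now = CurrentSignal(date(2025, 7, 15), "v2.0", {"adult": 0.6, "pediatric": 0.4})
print(reassessment_triggers(snap, now))
```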

The Broader Point

The AI governance programs that will define institutional AI capability over the next five years aren’t the ones that say no most efficiently. They’re the ones that can say yes to the right things, fast, with defensible evidence behind every approval.

That requires capturing benefits with the same rigor applied to risk, intake processes that account for agents and not just models, contract terms that address AI disclosure and material change, and frameworks for stakeholder-differentiated impact that can actually answer a CFO’s question.

The CHAI Leadership Summit brought together practitioners already leading this work. The foundational layer is in place at many institutions. The challenges of Year 2 of AI governance are about operational depth, and they’re solvable with the same investment organizations have already made in getting the first generation of AI governance infrastructure right.