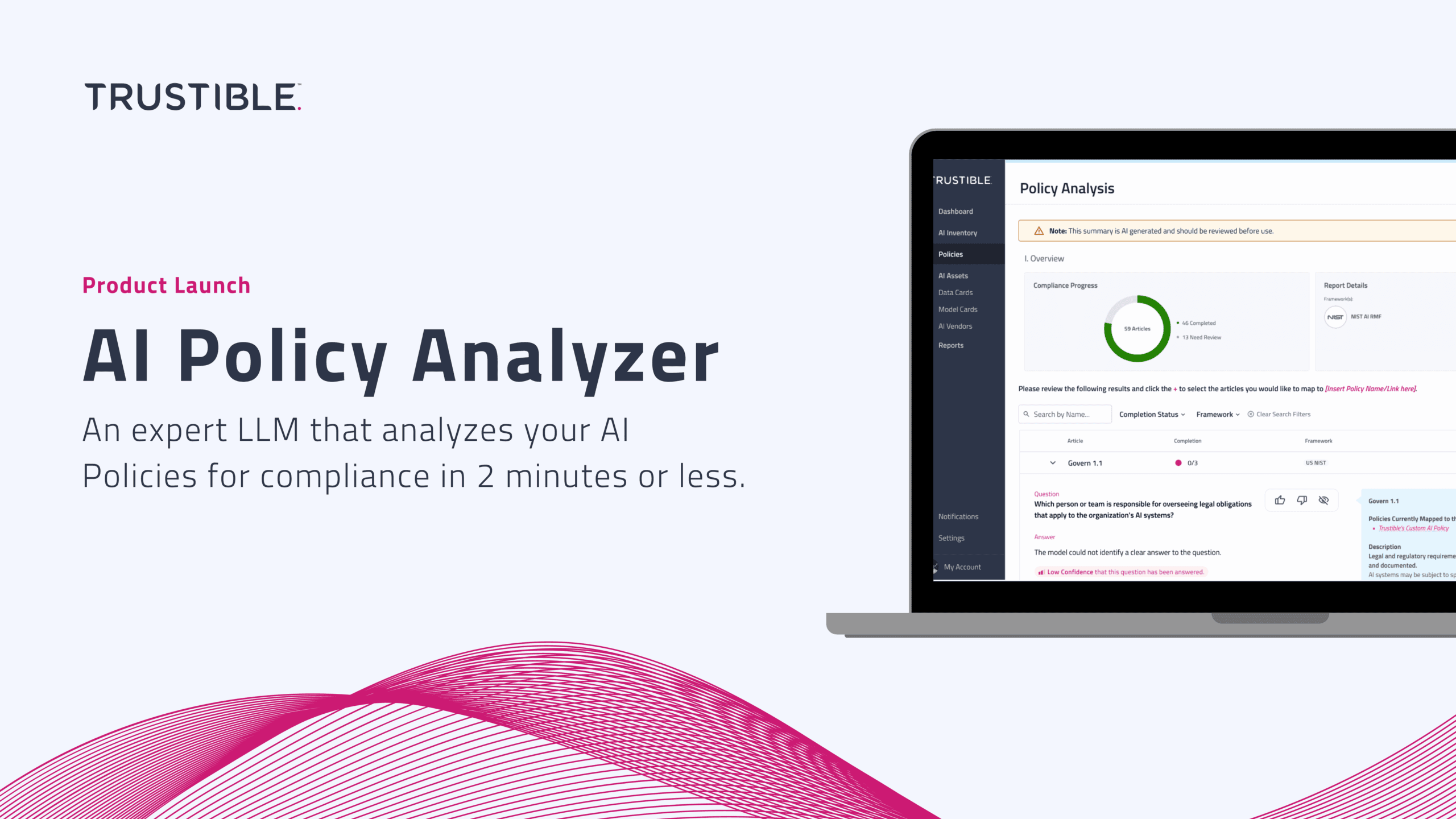

Today, we’re excited to launch our latest AI capability: the AI Policy Analyzer.

For enterprise AI leaders and governance experts, developing policies that guide the appropriate use of AI and mitigate its risks can be a daunting task. Moreover, determining whether those policies comply with AI regulations and standards can be costly, time-consuming, and overwhelming. Trustible’s AI Policy Analyzer is an expert AI system designed to simplify this process, providing an automated analysis of your existing AI policies in just minutes.

How It Works

- Upload Your Policy: Upload your existing AI policy document or select one of Trustible’s Policy Templates.

- Select a Compliance Framework: Choose the relevant compliance framework or regulation, such as the EU AI Act, NIST AI Risk Management Framework, or ISO/IEC 42001.

- Automated Analysis: Our AI Policy Analyzer evaluates the uploaded policy against structured criteria aligned with each requirement of the selected framework.

- Gap Analysis: Receive a detailed gap analysis in two minutes or less that highlights the areas you need to address to achieve compliance, along with tools and features to help you close the gaps.

As an enterprise AI leader, you’re under constant pressure to innovate while ensuring that your AI initiatives are compliant and aligned with your organization’s principles. Policies are an important step toward balancing innovation with risk management, but knowing what to include in them can be challenging. Moreover, keeping policies up to date with fast-evolving AI regulations and best practices is a never-ending task. The AI Policy Analyzer provides an automated solution to this complex problem.

A leading global financial services company recently used Trustible’s AI Policy Analyzer to evaluate their AI governance framework. Within minutes, the tool identified addressable gaps in compliance with the EU AI Act. This rapid insight allowed their team to make quick adjustments, avoiding legal fees and potential regulatory fines.

Value to Our Customers

- Save Time: Leverage an expert AI system to conduct comprehensive policy analysis in minutes, not weeks.

- Save Money: Reduce reliance on external consultants for policy development and evaluation.

- Reduce Risk: Ensure your AI policies are robust and compliant with existing regulations and international standards, minimizing potential liabilities.

- Stay Current: Keep up with the latest best practices and regulatory requirements, so your policies continue to guide your organization in the right direction.

The AI Policy Analyzer offers enterprise AI leaders and governance experts a powerful, efficient, and user-friendly solution for managing AI compliance. The system is best used to augment your existing policy development efforts and is not intended to replace the need for legal counsel.

Ready to learn more?