AI Governance Best Practices for Healthcare Systems and Pharmaceutical Companies

In the rapidly evolving landscape of healthcare, AI promises to revolutionize patient care, but it also brings significant risks. From algorithmic bias to data privacy breaches, the stakes are high. Effective AI governance is essential to harness the benefits of these technologies while safeguarding patient safety and ensuring compliance with regulations. This article delves into the critical challenges healthcare systems and pharmaceutical companies face, offering practical solutions and best practices for implementing trustworthy AI. Discover how to navigate the complexities of AI in healthcare and protect your organization from potential pitfalls.

Introducing the Trustible AI Governance Insights Center

At Trustible, we believe AI can be a powerful force for good, but it must be governed effectively to align with public benefit. The Trustible AI Governance Insights Center is a public, open-source library designed to equip enterprises, policymakers, and consumers with essential knowledge and tools to navigate AI’s risks and benefits. Our comprehensive taxonomies cover AI Risks, Mitigations, Benefits, and Model Ratings, providing actionable insights that empower organizations to implement robust governance practices. Join us in transforming the conversation around trusted AI into tangible, measurable outcomes. Explore the Insights Center today!

When Zero Trust Meets AI Governance: The Future of Secure and Responsible AI

Artificial intelligence is rapidly reshaping the enterprise security landscape. From predictive analytics to generative assistants, AI now sits inside nearly every workflow that once belonged only to humans. For CIOs, CISOs, and information security leaders, especially in regulated industries and the public sector, this shift has created both an opportunity and a dilemma: how do you innovate with AI at speed while maintaining the same rigorous trust boundaries you’ve built around users, devices, and data?

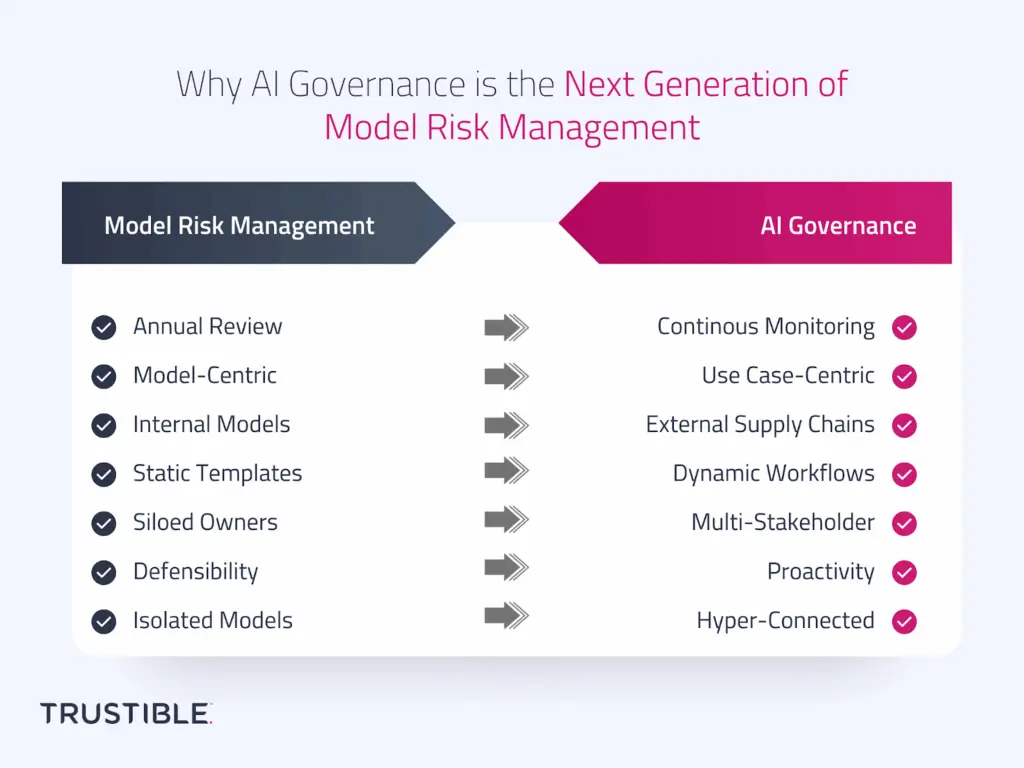

Why AI Governance is the Next Generation of Model Risk Management

For decades, Model Risk Management (MRM) has been a cornerstone of financial services risk practices. In banking and insurance, model risk frameworks were designed to control the risks of internally built, rule-based, or statistical models such as credit risk models, actuarial pricing models, or stress testing frameworks. These practices have served regulators and institutions well, providing structured processes for validation, monitoring, and documentation.

Should the EU “Stop the Clock” on the AI Act?

The European Union (EU) AI Act entered into force in August 2024, after years of negotiations (and some drama). Since then, the AI Act’s implementation has been somewhat bumpy. The initial set of obligations for general-purpose AI (GPAI) providers took effect in August 2025, but the voluntary Code of Practice faced multiple drafting delays. The finalized version was released with less than a month to go before GPAI providers needed to comply with the law.

Trustible and Carahsoft Announce Strategic Partnership to Bring AI Governance Platform to Government Agencies

Collaboration Enables Streamlined Access to AI Governance Solutions for the Public Sector

ARLINGTON, Va., and RESTON, Va. – August 38, 2025 – Trustible, a leader in AI governance, risk and compliance, and Carahsoft Technology Corp., The Trusted Government IT Solutions Provider®, today announced a strategic partnership. Under the agreement, Carahsoft will serve as Trustible’s Master Government […]

Building Trust in Enterprise AI: Insights from Trustible, Schellman, and Databricks

AI is rapidly reshaping the enterprise landscape, but organizations face growing pressure from regulators, stakeholders, and customers to ensure these systems are trustworthy, ethical, and well-governed. To help unpack this evolving space, Trustible, Schellman, and Databricks co-hosted a webinar on how AI governance frameworks, standards, and compliance practices can become strategic tools to accelerate AI adoption.

What Does the Global Pause on AI Laws Mean for AI Governance?

The global AI regulatory landscape has taken a completely new direction in just one year. The US was previously leading the way on AI safety, attempting to work with like-minded countries on building a responsible AI ecosystem. Yet since January 2025, the Trump Administration has swiftly shifted the narrative by focusing on AI innovation and pausing […]

Trustible Launches Global Partner Program to Unite the AI Governance Ecosystem

New alliance connects technology, services, and channels to embed governance across the AI lifecycle, accelerating safe adoption for organizations

Today, I’m excited to announce the global launch of the Trustible Partner Program. This ecosystem is purpose-built to weave AI governance through every stage of the AI lifecycle. Our program unites technology alliances, system integrators, resellers, […]