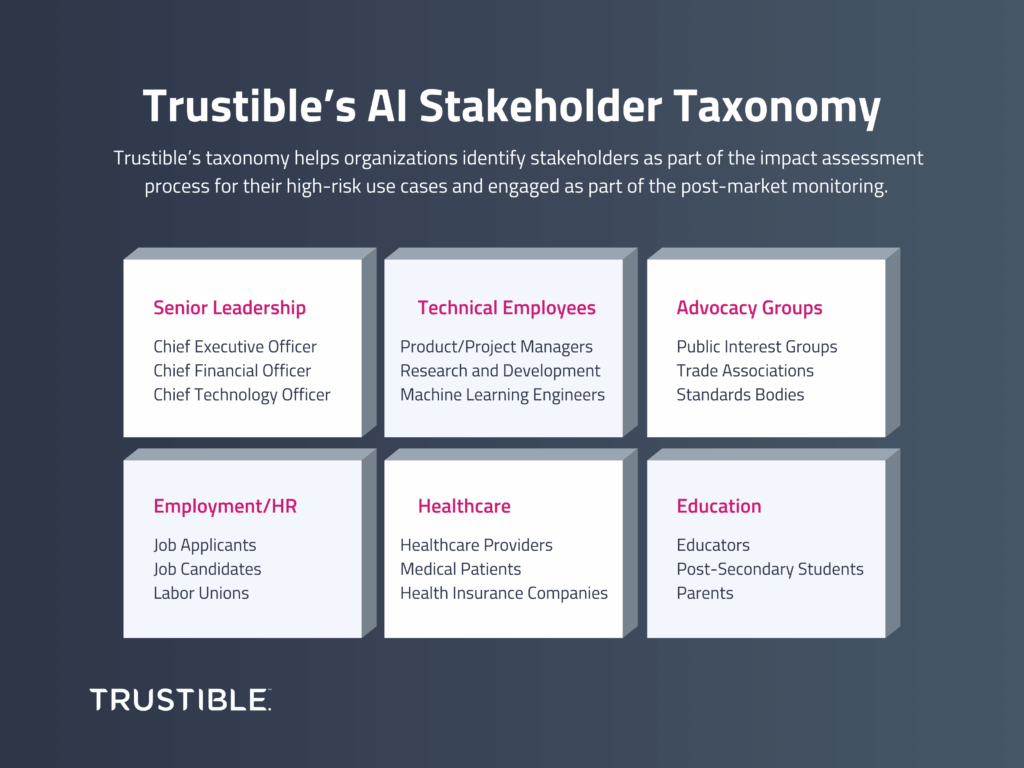

Understanding AI Stakeholders with Trustible’s AI Stakeholder Taxonomy

Trustible developed an AI Stakeholder Taxonomy that can help organizations easily identify stakeholders as part of the impact assessment process for their high-risk use cases.

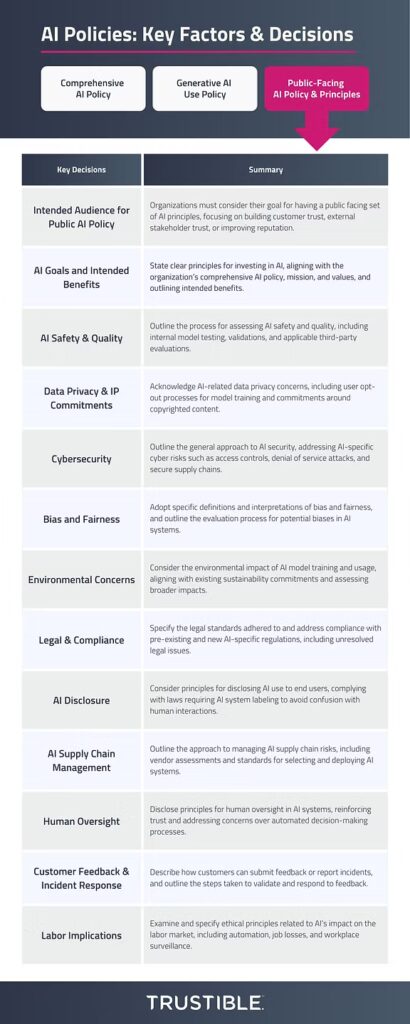

AI Policy Series 3: Drafting Your Public AI Principles Policy

In our final blog post of this AI Policy series (see Comprehensive AI Policy and AI Use Policy guidance posts here), we want to explore what organizations should make available to the public about their use of AI. According to recent research by Pew, 52 percent of Americans feel more concerned than excited by AI. This data demonstrates that, while organizations may understand or realize the value of AI, their users and customers may harbor some skepticism. Policymakers and large AI companies have sought to address public concerns, albeit in their own ways.

AI Policy Series 1: Drafting Your Comprehensive AI Policy

As organizations increase their adoption of AI, governance leaders are looking to put in place policies that ensure their AI deployment aligns with their organization’s principles, complies with regulatory standards, and mitigates potential risks. But knowing where to start in developing your policies can be overwhelming. Let’s start with some important context. AI Policies break […]

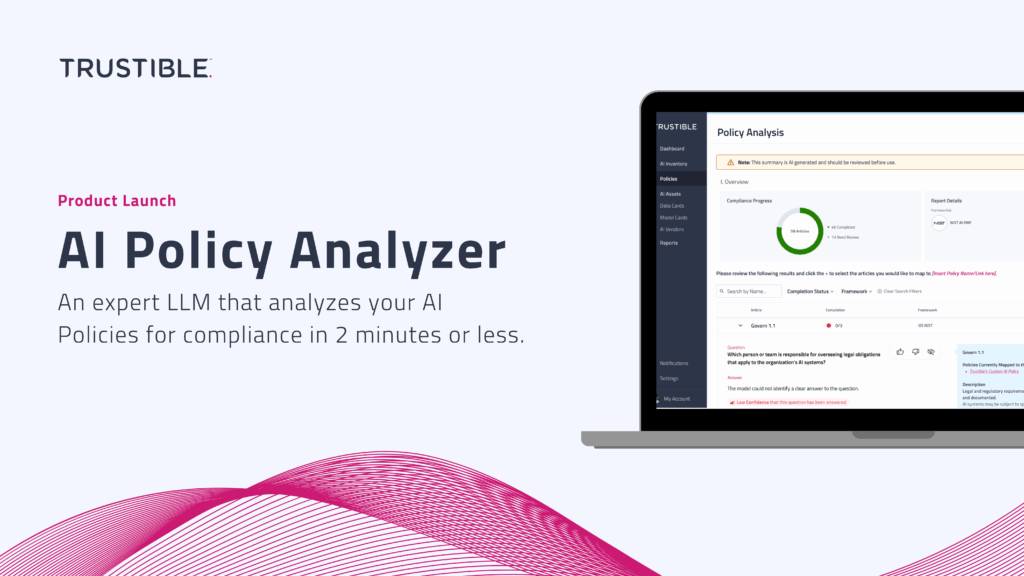

Product Launch: Trustible’s AI Policy Analyzer

For enterprise AI leaders and governance experts, developing policies to guide the appropriate use and risk mitigation of AI can be a daunting task. Moreover, understanding whether a policy is compliant with AI regulations and standards can be costly, time-consuming, and overwhelming. Trustible’s AI Policy Analyzer is an expert AI system designed to simplify this process, providing an automated analysis of your existing AI policies in just minutes.

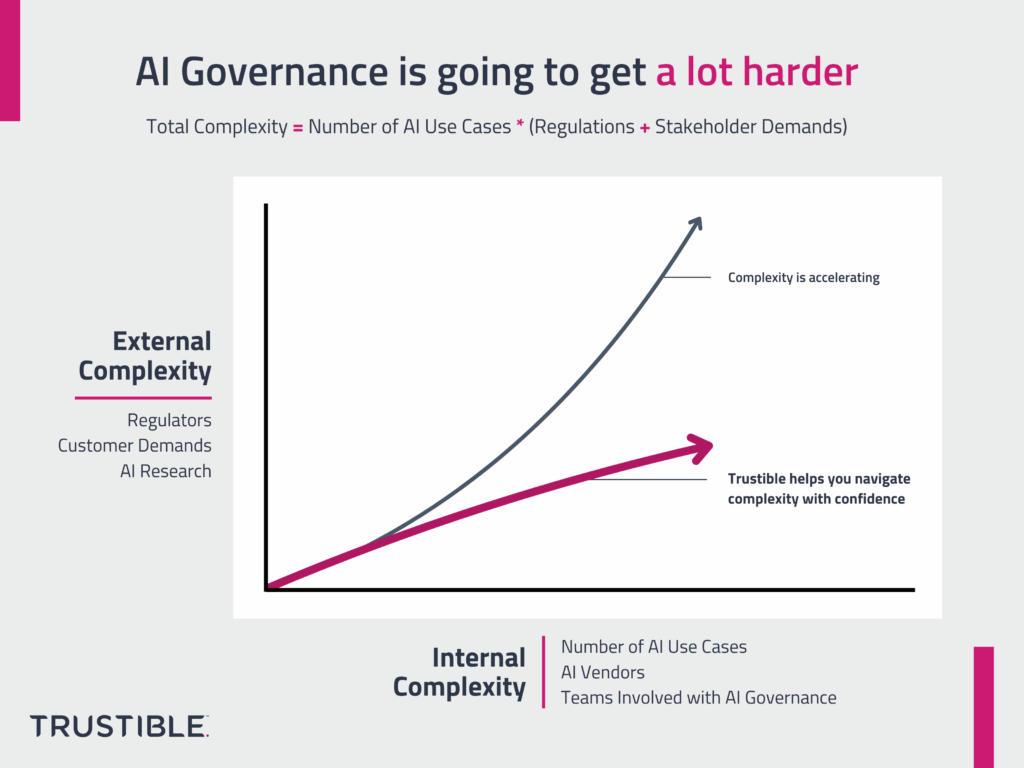

Enhancing the Effectiveness of AI Governance Committees

Organizations are increasingly deploying artificial intelligence (AI) systems to drive innovation and gain competitive advantages. Effective AI governance is crucial for ensuring these technologies are used ethically, comply with regulations, and align with organizational values and goals. However, as AI use and AI regulations become more pervasive, the complexity of managing these technologies responsibly grows with them.

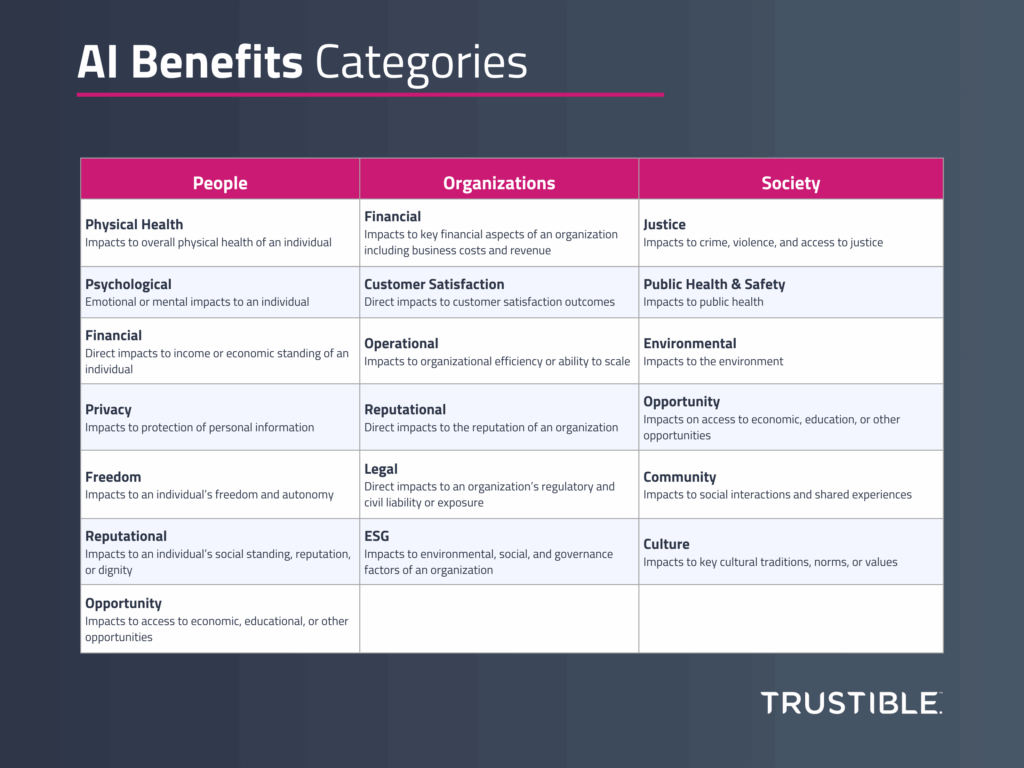

A Framework for Measuring the Benefits of AI

Significant research has been invested in studying AI risks, a response to the rapid pace of deployment of highly capable AI models across a wide variety of use cases. Over the last year, governments around the world have established AI Safety Institutes tasked with developing methodologies to assess the impact and probability of various […]

Trustible Announces New Model Transparency Ratings to Enhance AI Model Risk Evaluation

Organizational leaders are looking to better understand which AI models may be the best fit for a given use case. However, limited public transparency on these systems makes this evaluation difficult.

In response to the rapid development and deployment of general-purpose AI (GPAI) models, Trustible is proud to introduce its research on Model Transparency Ratings – offering a comprehensive assessment of transparency disclosures of the top 21 Large Language Models (LLMs).

Why AI Governance is going to get a lot harder

AI governance is hard because it involves collaboration across multiple teams and an understanding of a highly complex technology and its supply chains. It’s about to get even harder. The complexity of AI governance is growing along two different dimensions at the same time – both of them are poised to accelerate in the coming […]

Operationalizing AI Governance in Insurance

Federal regulators have largely focused on issuing guidance and initiating inquiries into AI, whereas state regulators have taken a more proactive stance, addressing AI’s unique challenges within sectors such as insurance. The New York Department of Financial Services released a draft guidance letter proposing standards for identifying, measuring, and mitigating potential bias from use of […]