Trustible Selected for Google for Startups Latino Founders Fund

Trustible is thrilled to announce that it has been selected as a recipient of the prestigious Google for Startups Latino Founders Fund. This funding from Google is a testament to Trustible’s innovation, growth potential, and continued leadership in the field of AI governance.

AI Policy Series 1: Drafting Your Comprehensive AI Policy

As organizations increase their adoption of AI, governance leaders are looking to put in place policies that ensure their AI deployment aligns with their organization’s principles, complies with regulatory standards, and mitigates potential risks. But knowing where to start when developing those policies can be overwhelming.

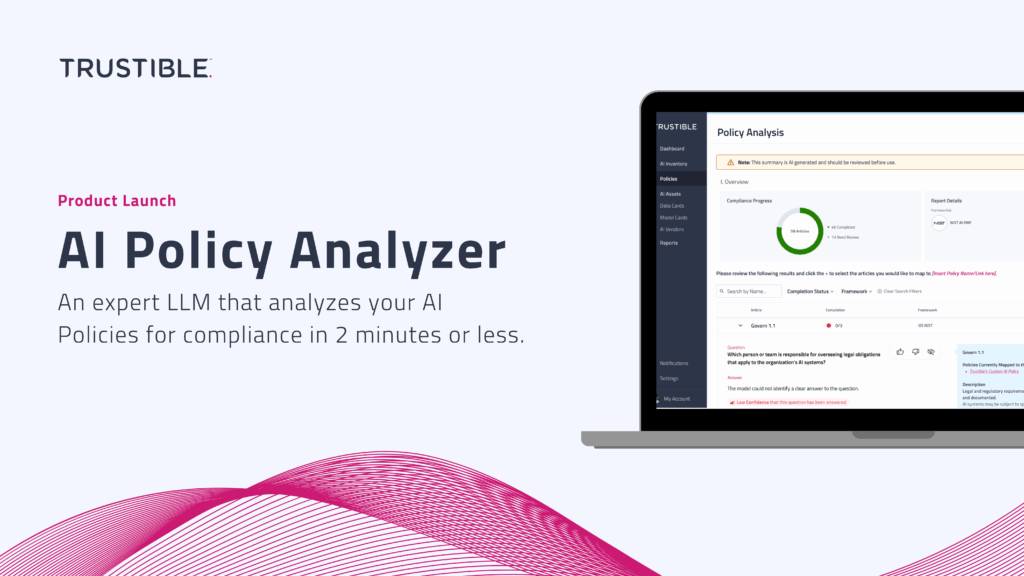

Product Launch: Trustible’s AI Policy Analyzer

For enterprise AI leaders and governance experts, developing policies to guide the appropriate use and risk mitigation of AI can be a daunting task. Moreover, determining whether those policies comply with AI regulations and standards can be costly, time-consuming, and overwhelming. Trustible’s AI Policy Analyzer is an expert AI system designed to simplify this process, providing an automated analysis of your existing AI policies in just minutes.

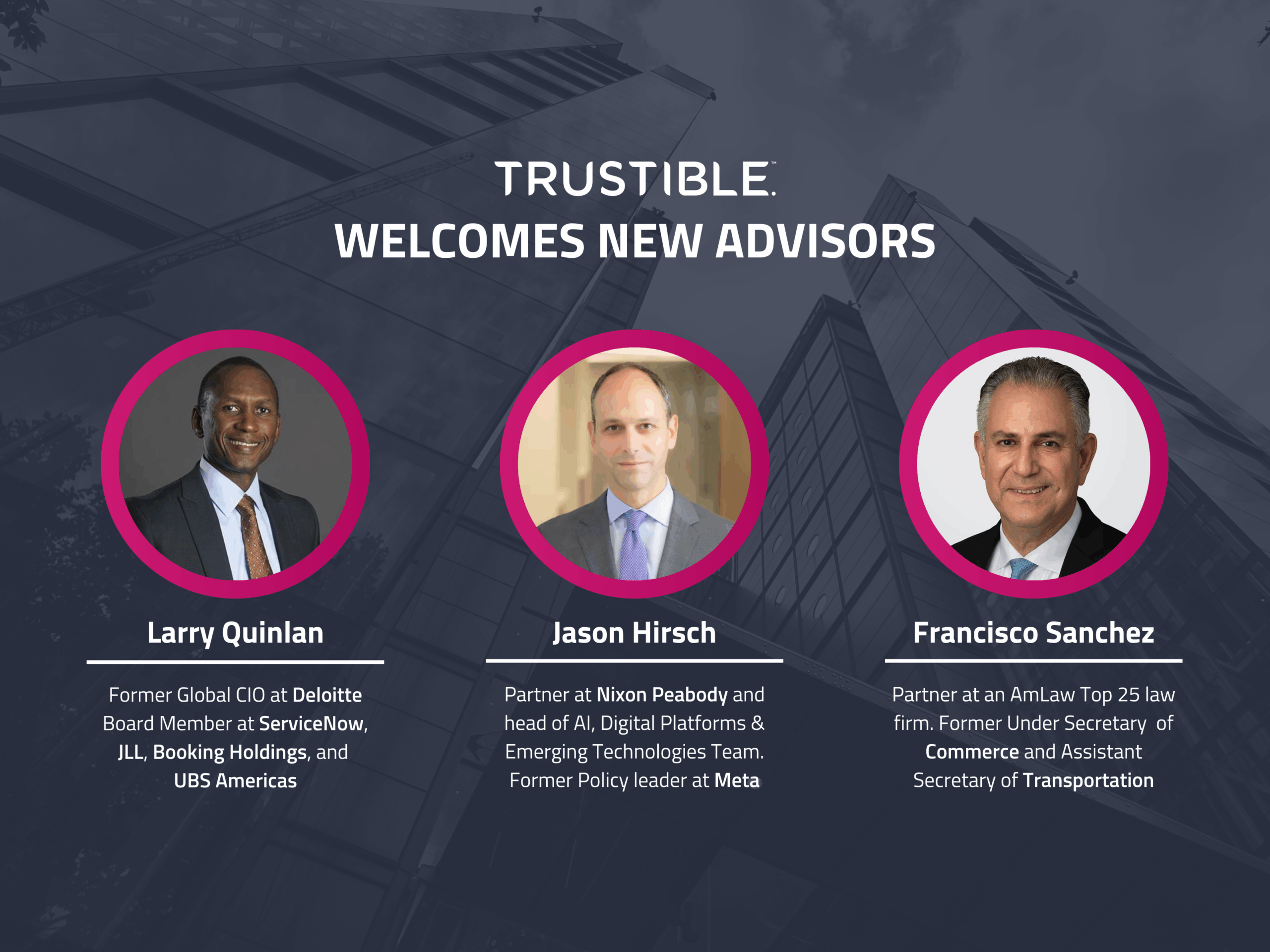

Trustible Welcomes New Advisors to Strengthen Enterprise and Legal Expertise in AI

We are thrilled to announce the addition of three new members to the Trustible Advisory Board: Larry Quinlan, Jason D. Hirsch, and Francisco Sánchez. Their deep expertise in AI, enterprise technology, regulatory strategy, and product counseling will guide Trustible customers and leadership on global challenges at the intersection of technology, law, and government policy.

Everything you need to know about Colorado SB 205

On May 17, 2024, Colorado Governor Jared Polis signed the Consumer Protection for Artificial Intelligence (SB 205) into law, making it the first comprehensive state AI law to impose rules on certain high-risk AI systems. The law requires that AI used to support ‘consequential decisions’ in certain use cases be treated as ‘high risk’ and be subject to a range of risk management and reporting requirements. The new rules will come into effect on February 1, 2026.

Enhancing the Effectiveness of AI Governance Committees

Organizations are increasingly deploying artificial intelligence (AI) systems to drive innovation and gain competitive advantages. Effective AI governance is crucial for ensuring these technologies are used ethically, comply with regulations, and align with organizational values and goals. However, as AI use and AI regulations become more pervasive, the complexity of managing these technologies responsibly grows with them.

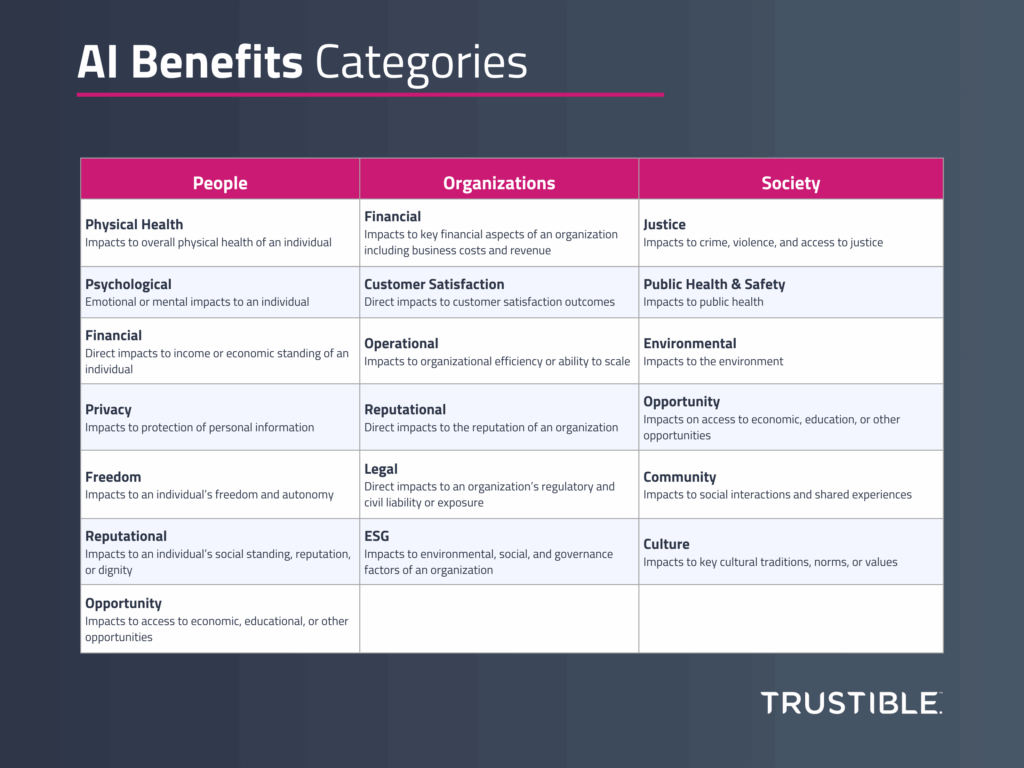

A Framework for Measuring the Benefits of AI

Significant research has been invested in studying AI risks, a response to the rapid pace of deployment of highly capable AI models across a wide variety of use cases. Over the last year, governments around the world have established AI Safety institutes tasked with developing methodologies to assess the impact and probability of various […]

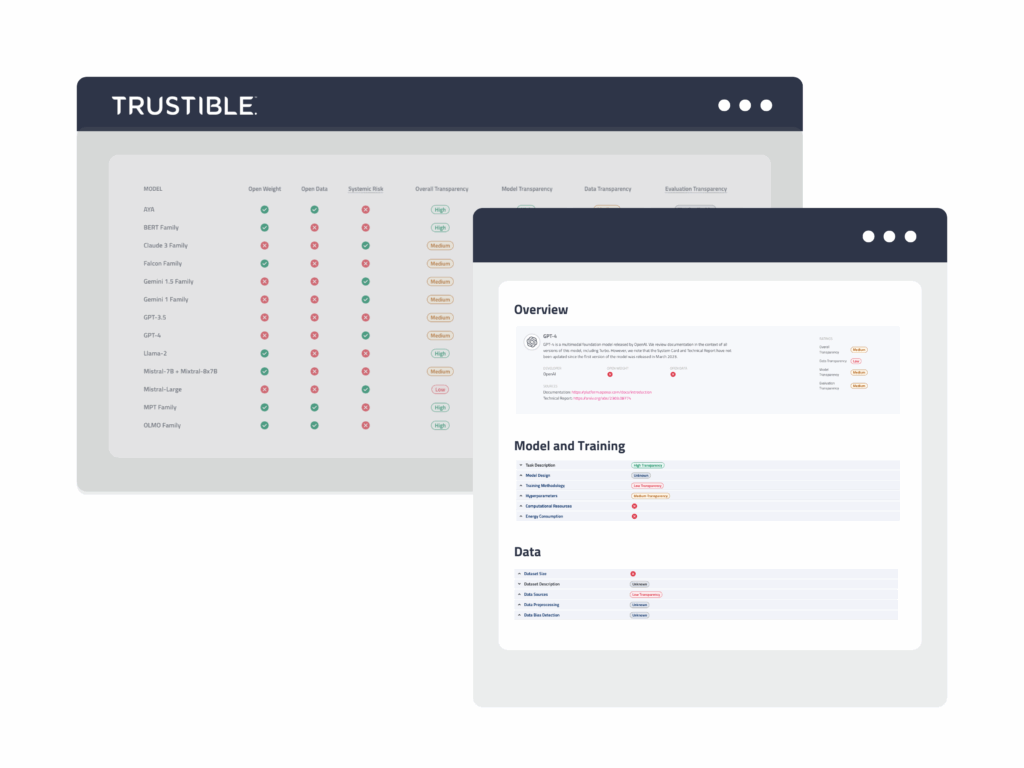

Trustible Announces New Model Transparency Ratings to Enhance AI Model Risk Evaluation

Organizational leaders are looking to better understand which AI models may be the best fit for a given use case. However, limited public transparency about these models makes this evaluation difficult.

In response to the rapid development and deployment of general-purpose AI (GPAI) models, Trustible is proud to introduce its research on Model Transparency Ratings – offering a comprehensive assessment of transparency disclosures of the top 21 Large Language Models (LLMs).

Inside Trustible’s Methodology for Model Transparency Ratings

The speed at which new general-purpose AI (GPAI) models are being developed is making it difficult for organizations to select which model to use for a given AI use case. While a model’s performance on task benchmarks, its deployment model, and its cost are the primary selection criteria, other factors, including a model’s data sources, ethical design decisions, and regulatory risks, must be accounted for as well. These considerations cannot be inferred from a model’s performance on a benchmark, but they are necessary to understand whether a specific model is appropriate for a given task or legal to use within a given jurisdiction.