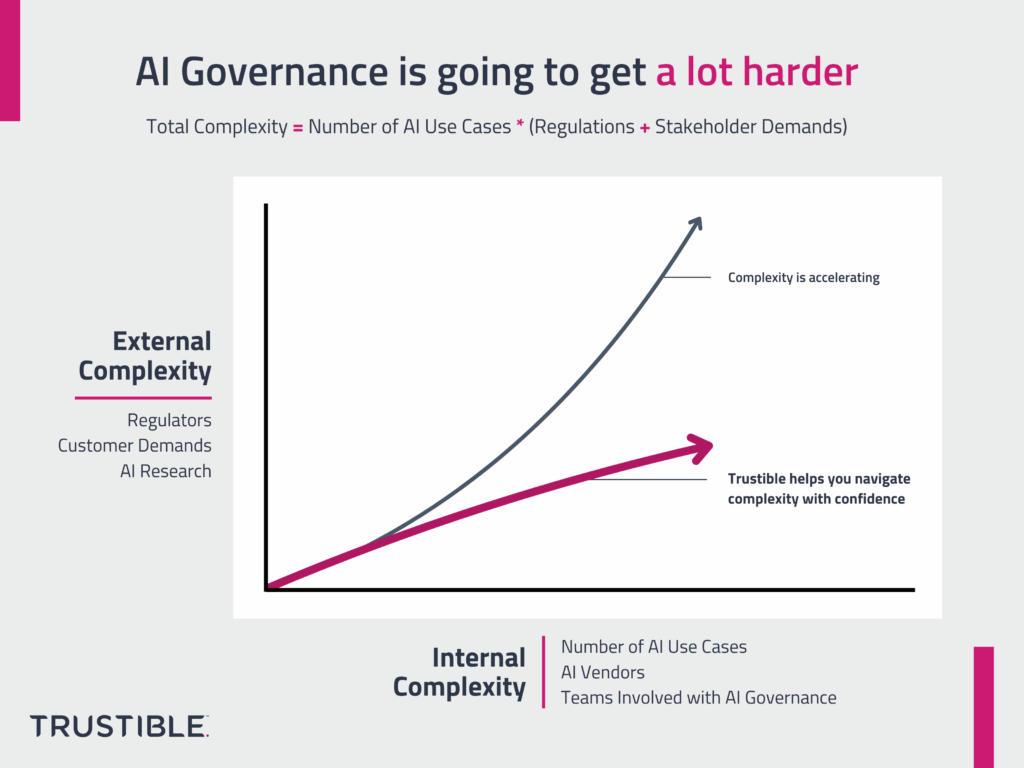

Why AI Governance is going to get a lot harder

AI Governance is hard because it involves collaboration across multiple teams and an understanding of a highly complex technology and its supply chains. It’s about to get even harder. The complexity of AI governance is growing along two different dimensions at the same time, and both of them are poised to accelerate in the coming […]

Analysis – How Trustible Helps Organizations Comply With The EU AI Act

The EU AI Act sets a global precedent in AI regulation, emphasizing human rights in the development and implementation of AI systems. While the eventual law will directly apply to EU countries, its extraterritorial reach will impact global businesses in profound ways. Global businesses producing AI-related applications or services that either impact EU citizens or supply EU-based companies will be responsible for complying with the EU AI Act. Failure to comply with the Act can result in fines of up to 7% of global turnover or €35m for major violations, with lower penalties for SMEs and startups.
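To make the penalty ceiling concrete, here is a minimal sketch of the arithmetic for major violations. It assumes the cap is whichever of the two figures is higher, which is our reading of the Act's penalty provisions rather than something stated in the excerpt above. The function name `max_major_fine_eur` is hypothetical, and the lower penalties for SMEs and startups are not modeled.

```python
# Illustrative only: upper bound on a fine for a major violation,
# assuming the cap is the HIGHER of 7% of global turnover or EUR 35m.
FIXED_CAP_EUR = 35_000_000
TURNOVER_PERCENT = 7


def max_major_fine_eur(global_turnover_eur: int) -> int:
    """Return the assumed maximum fine in euros for a given global turnover."""
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_cap = global_turnover_eur * TURNOVER_PERCENT // 100
    return max(turnover_cap, FIXED_CAP_EUR)


if __name__ == "__main__":
    for turnover in (100_000_000, 1_000_000_000):
        print(turnover, max_major_fine_eur(turnover))
```

Under this assumption, the fixed €35m figure dominates for companies with less than €500m in global turnover, and the percentage-based figure dominates above that.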

Analysis – Mapping the Requirements of NIST AI RMF, ISO 42001, and the EU AI Act

Navigating the evolving and complex landscape for AI governance requirements can be a real challenge for organizations. Previously, Trustible created this comprehensive cheat sheet comparing three important compliance frameworks: the NIST AI Risk Management Framework, ISO 42001, and the EU AI Act. This easy-to-understand visual maps the similarities and differences between these frameworks, […]

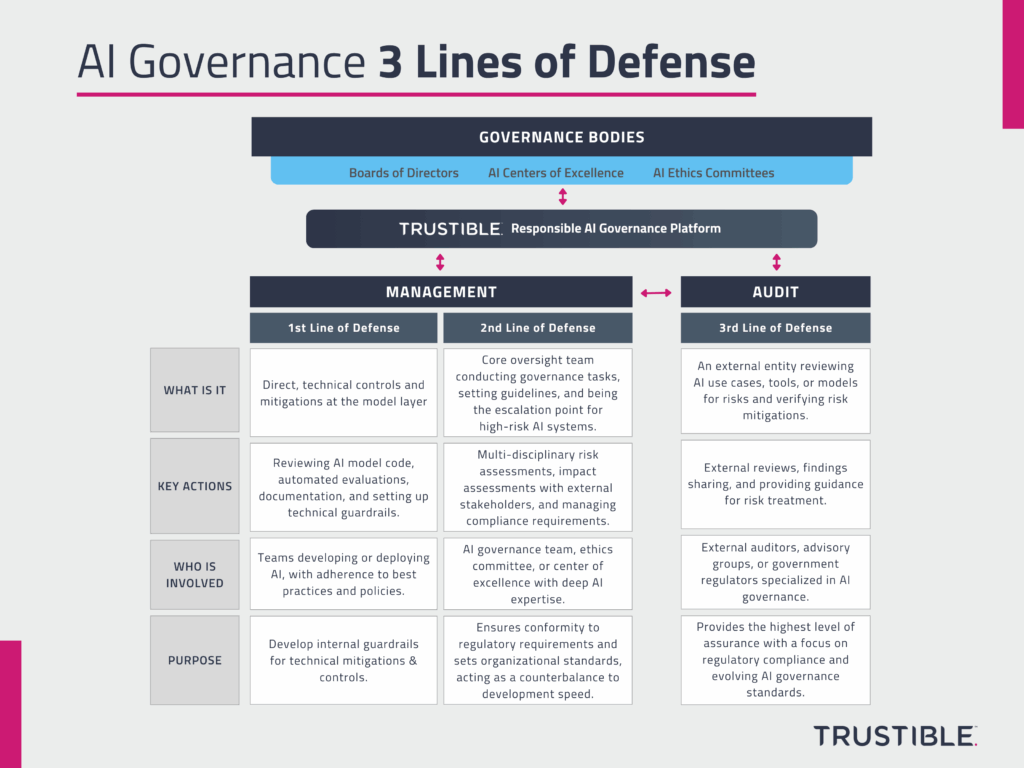

3 Lines of Defense for AI Governance

AI Governance is a complex task because it involves multiple teams across an organization working to understand and evaluate the risks of dozens of AI use cases, and managing highly complex models with deep supply chains. On top of the organizational and technical complexity, AI can be used for a wide range of purposes, some of which are relatively safe (e.g. an email spam filter), while others pose serious risks (e.g. a medical recommendation system). Organizations want to be responsible with their AI use, but struggle to balance innovation and adoption of AI for low-risk uses with oversight and risk management for high-risk uses. To manage this, organizations need to adopt a multi-tiered governance approach that allows easy, safe experimentation by development teams, with clear escalation points for riskier uses.
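The tiered idea can be sketched as a simple routing rule: low-risk use cases stay with the development team, while riskier ones hit an escalation point. This is a minimal illustration only. The two risk levels, the `UseCase` type, the `review_path` function, and the path labels are all hypothetical and are not taken from the article.

```python
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    # Hypothetical two-level scale; a real program may use more tiers.
    LOW = "low"
    HIGH = "high"


@dataclass
class UseCase:
    name: str
    risk: RiskLevel


def review_path(use_case: UseCase) -> str:
    """Return the (hypothetical) review path for a use case."""
    if use_case.risk is RiskLevel.LOW:
        # Low-risk uses can proceed with lightweight, team-level approval.
        return "team-level sign-off"
    # Anything riskier is escalated for dedicated oversight.
    return "escalate to governance review"


if __name__ == "__main__":
    for case in (
        UseCase("email spam filter", RiskLevel.LOW),
        UseCase("medical recommendation system", RiskLevel.HIGH),
    ):
        print(case.name, "->", review_path(case))
```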

How State Executive Orders are Shaping U.S. AI Policy

States continue to pave the way forward on AI policy. As we have previously discussed, 2023 saw a flurry of activity from state legislatures on various AI-related legislation. However, state legislatures were not alone in attempts to implement greater oversight for AI technologies. Over the past year, the Governors of California, Maryland, New Jersey, Oregon, […]

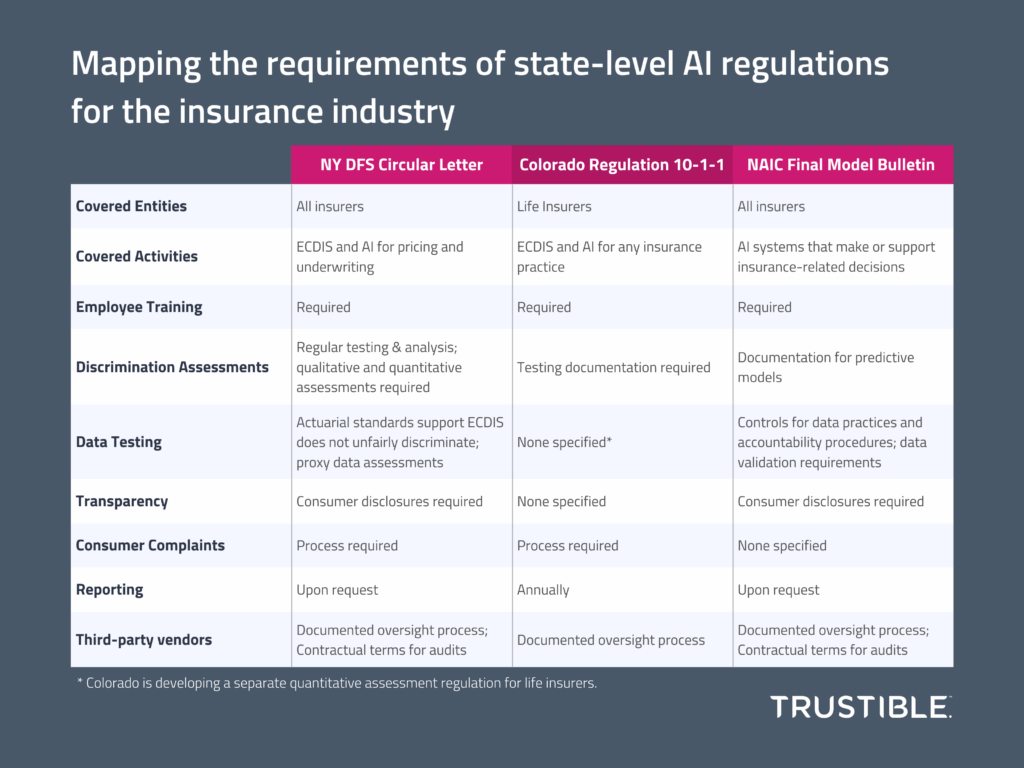

Operationalizing AI Governance in Insurance

Federal regulators have largely focused on issuing guidance and initiating inquiries into AI, whereas state regulators have taken a more proactive stance, addressing AI’s unique challenges within sectors such as insurance. The New York Department of Financial Services released a draft guidance letter proposing standards for identifying, measuring, and mitigating potential bias from use of […]

An Applied Ethical AI Framework

In our new white paper, we discuss how AI governance professionals and their organizations can apply an actionable and flexible framework for evaluating ethical decisions for AI systems. Many organizations talk about Ethical AI, but many struggle to define it clearly. They often settle on sets of high-level principles or values, but then struggle […]

Product Launch: Introducing ISO 42001

Today, we’re excited to announce that we are now offering customers access to the ISO 42001 standard within the Trustible platform, becoming the first AI governance company to offer the standard on its platform. ISO 42001 is positioned to be the first global auditable standard designed to foster trustworthiness in AI systems by setting a […]

Is it AI? How different laws & frameworks define AI

The race to regulate AI is producing an array of approaches, from light-touch to highly prescriptive regimes. Governments, industry, and standards-setting bodies are rushing to weigh in on what guardrails exist for AI developers and deployers. Yet the regulatory conversations often take for granted the answer to one fundamental question: What is AI? At its […]