Trustible Announces Participation in Department of Commerce Consortium Dedicated to AI Safety

Trustible will be one of the leading AI stakeholders to help advance the development and deployment of safe, trustworthy AI under the new U.S. Government safety institute. (Washington, DC) – On February 8, 2024, Trustible announced that it joined some of the nation’s leading artificial intelligence (AI) stakeholders to participate in a Department of Commerce initiative to […]

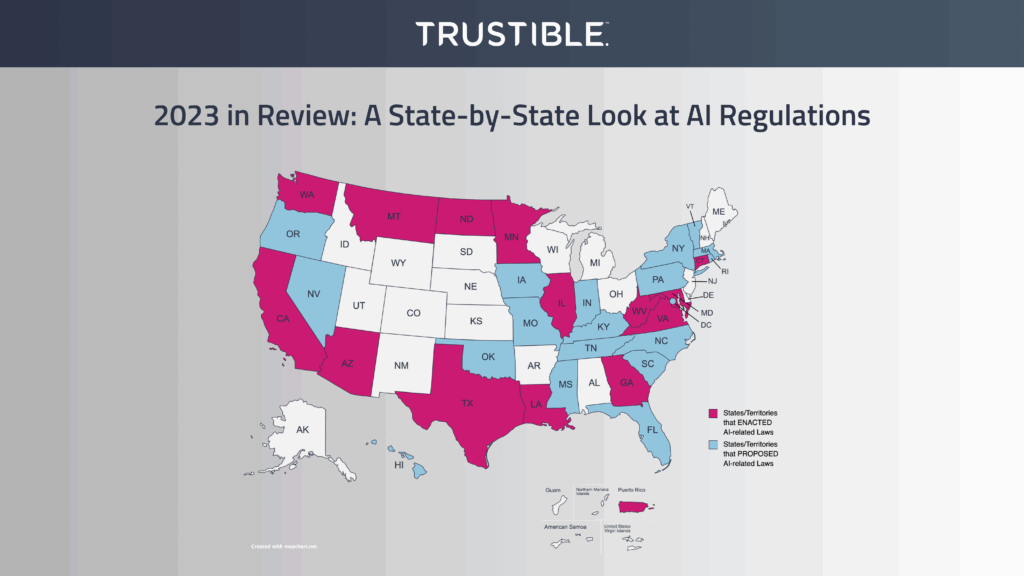

2023 in Review: A State-by-State Look at AI Regulations

In November 2022, ChatGPT brought the AI revolution straight into the hands of everyday consumers. However, if 2022 launched the proliferation and democratization of AI technology, 2023 can be remembered as the year in which policymakers tried to rein in AI. In the U.S., every branch of the federal government has weighed in on […]

Life After the Rite Aid Order: A Discussion on How the FTC is Shaking Up AI

AI incidents can have major implications for companies looking to develop and deploy AI. Organizations can be harmed financially or reputationally if an AI system performs harmful or unintended actions that affect people, furthering the need for AI governance. The American drugstore chain Rite Aid was recently banned by the Federal Trade Commission (FTC) from using AI facial recognition […]

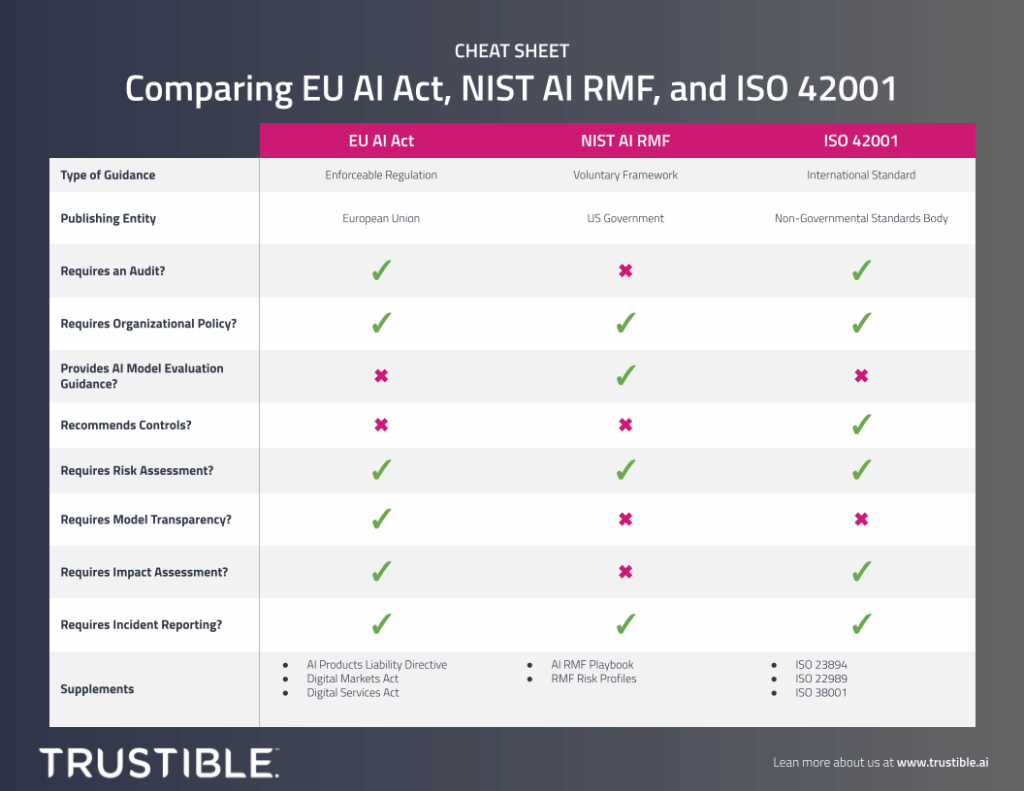

CHEAT SHEET: Comparing EU AI Act, NIST AI RMF, and ISO 42001

Navigating the evolving and complex landscape of AI governance requirements can be a real challenge for organizations. We’ve created this comprehensive cheat sheet comparing three important compliance frameworks: the EU AI Act, the NIST AI Risk Management Framework, and ISO 42001. This easy-to-understand visual maps the similarities and differences between these frameworks, providing […]

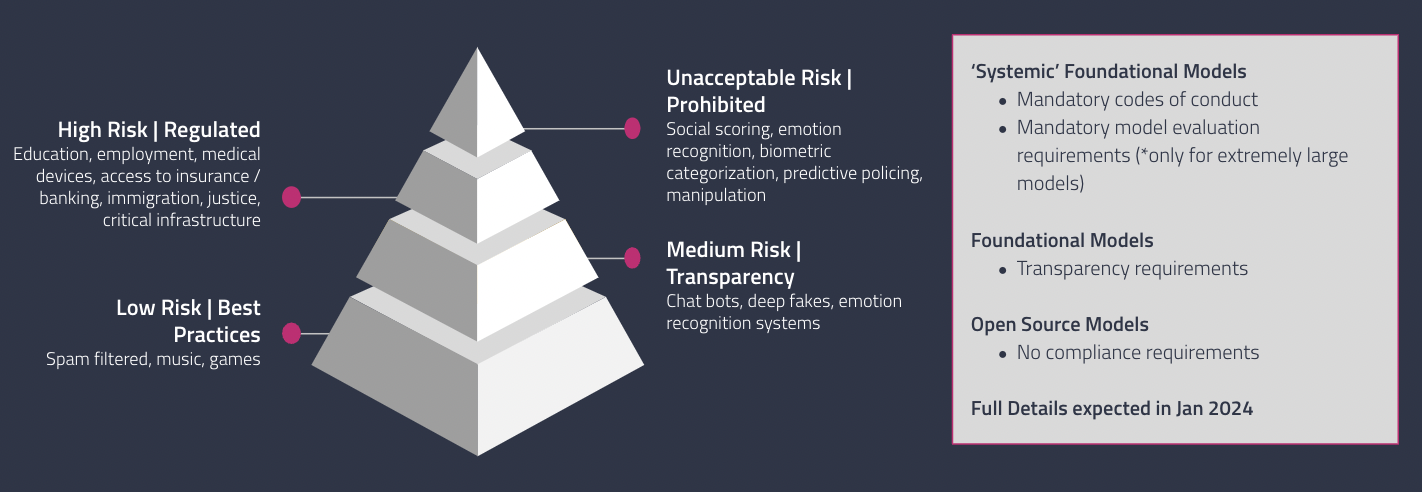

The EU AI Act Should Be A Wake-Up Call for American Companies

On December 9, 2023, European Union (EU) policymakers reached an agreement on the proposed Artificial Intelligence (AI) Act, which sets the stage for the EU to pass the AI Act as early as January 2024. The impending vote on the compromise legislation marks a significant development in the global AI regulatory landscape, one that American […]

Everything you need to know about the Colorado AI Life Insurance Regulation (Regulation 10-1-1)

What is Colorado Regulation 10-1-1? In July 2021, Governor Jared Polis signed SB 21-169 into law, which directed the Colorado Division of Insurance (CO DOI) to adopt risk management requirements that prevent algorithmic discrimination in the insurance industry. After two years and several revisions, a final risk management regulation for life insurance providers was officially […]

Everything you need to know about the NIST AI Risk Management Framework

What is the NIST AI RMF? The National Institute of Standards and Technology (NIST) Artificial Intelligence Risk Management Framework is a voluntary framework released in 2023 that helps organizations identify and manage the risks associated with the development and deployment of artificial intelligence. It is similar in its intent and structure to the NIST Cybersecurity Framework […]

Privacy Pioneers: AI as the New Frontier

In our new research paper, we discuss how privacy professionals and their organizations can take on AI governance, and what will happen if they don’t. Key findings include:

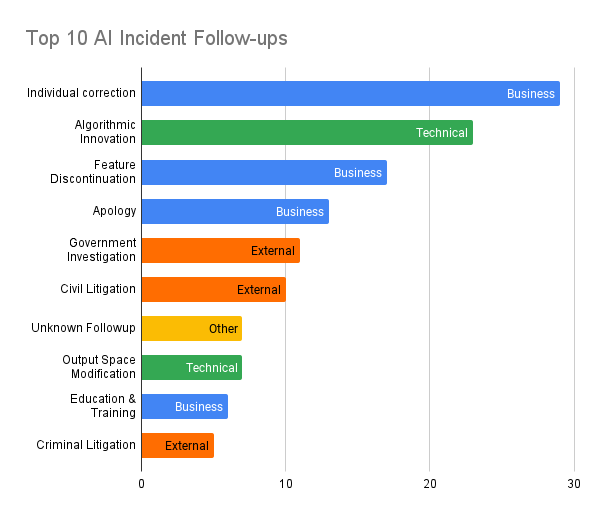

What to do when AI goes wrong?

AI systems have immense beneficial applications; however, they also carry significant risks. Research into these risks, and the broader field of AI safety, received comparatively little attention or investment until recently. For the longest time, there were no reliable sources of information about adverse events caused by AI systems for researchers to study. […]